'from allennlp.commands.elmo import ElmoEmbedder' not work even in 0.9.0 · Issue #5203 · allenai/allennlp · GitHub

Improving a Sentiment Analyzer using ELMo — Word Embeddings on Steroids – Real-World Natural Language Processing

Training ELMO from Scratch on Custom Data-set for Generating Embeddings: Tensorflow | Machine Learning in Action

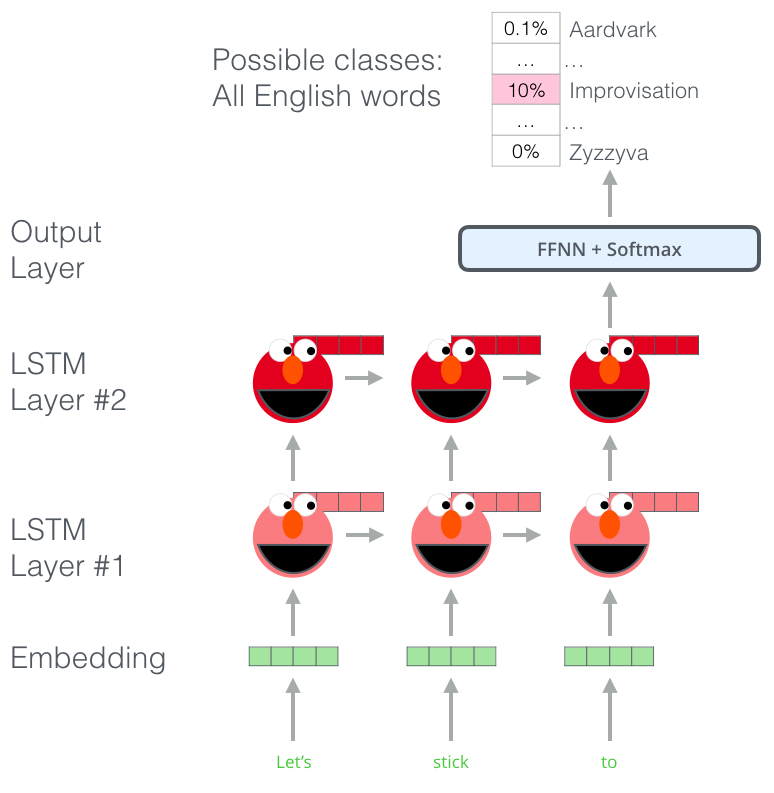

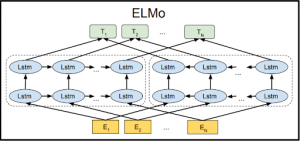

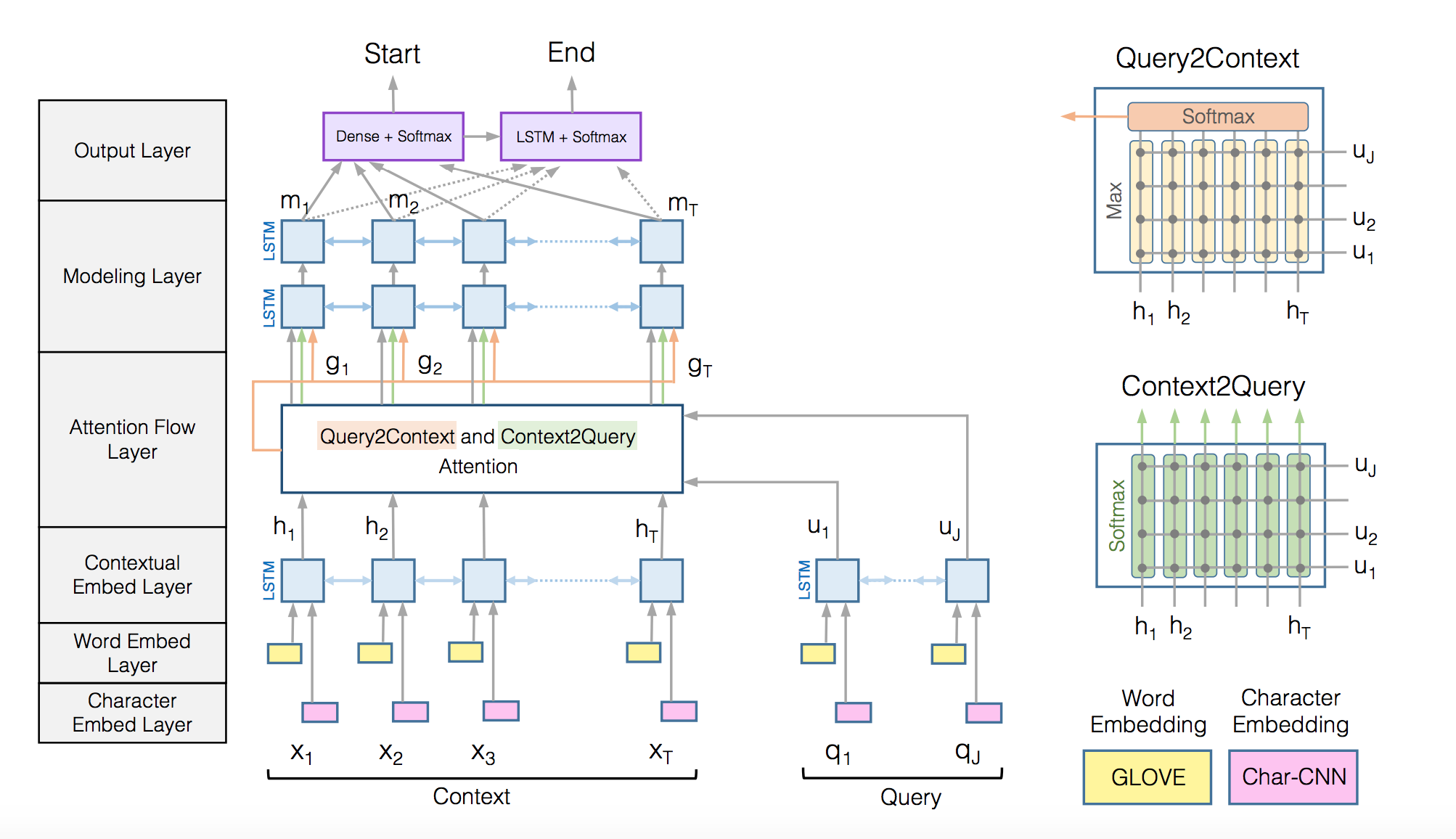

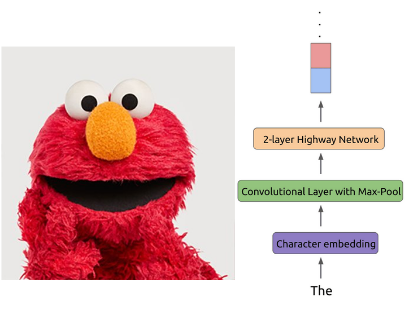

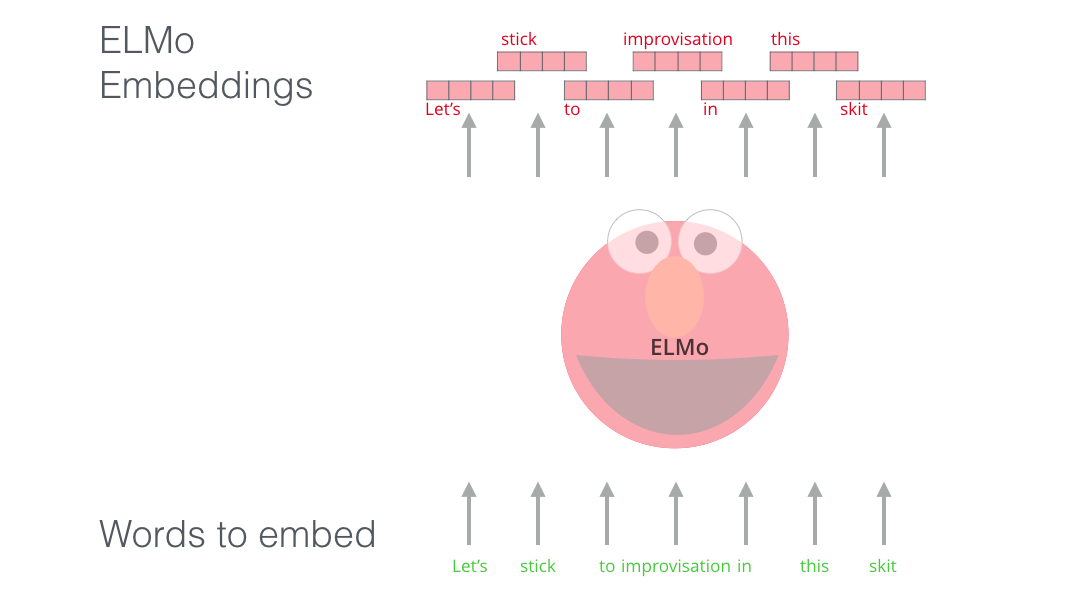

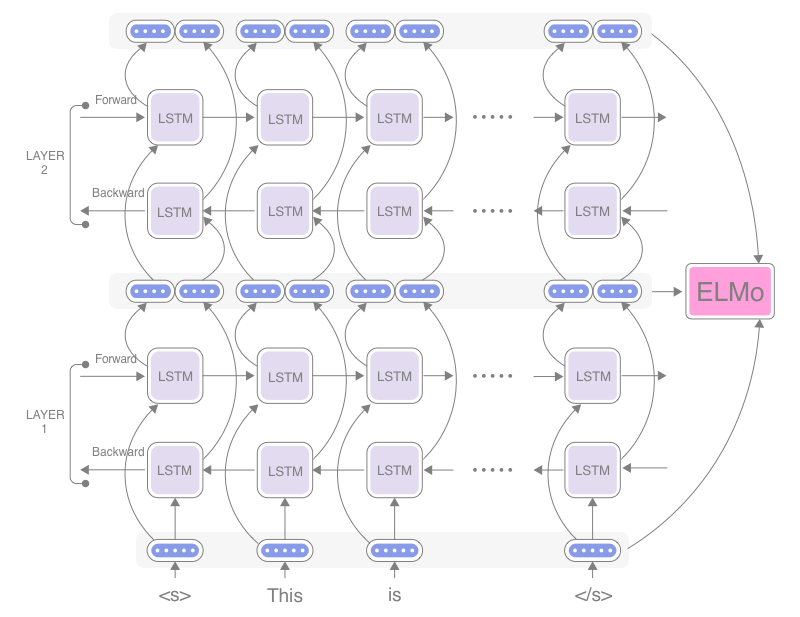

The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing machine learning one concept at a time.

Matthew Peters on Twitter: "Our paper "Deep contextualized word representations" is now on Arxiv. ELMo representations from pre-trained language models set new SOTA for 6 diverse NLP tasks, SQuAD, SNLI, SRL, coref,

A no-frills guide to most Natural Language Processing Models — The LSTM Age — Seq2Seq, InferSent, Skip-Thought, Quick-Thought, ELMo, Flair, and ULMFiT | by Ilias Miraoui | Towards Data Science
